1.00 vs. 1.02 - Does it Matter?

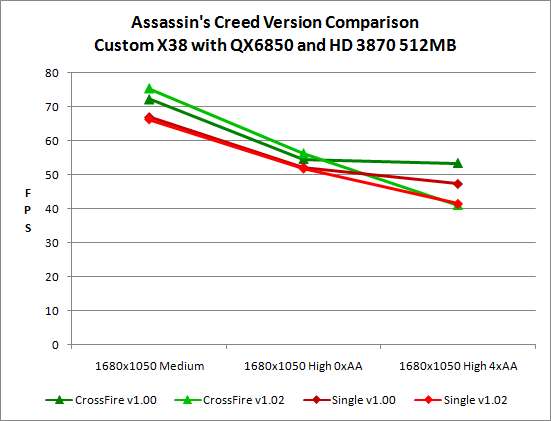

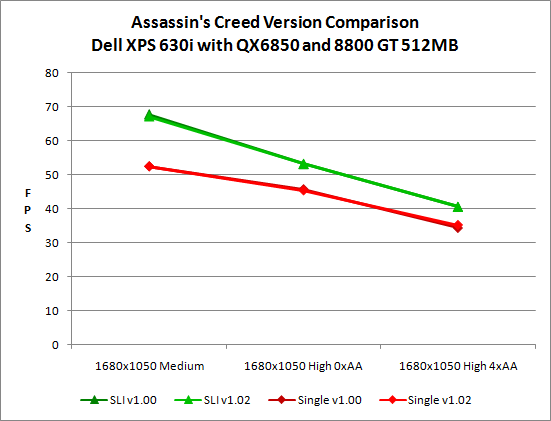

If you read the previous pages, you can probably already guess the answer to this question. The 1.02 patch fixes a few minor errors, and it also removes DirectX 10.1 support. ATI HD 3000 series hardware is the only current graphics solution that supports DX10.1, so barring other changes there shouldn't be a performance difference on NVIDIA hardware. We tested at three settings for this particular scenario: Medium Quality, High Quality, and High Quality with 4xAA.

NVIDIA performance is more or less identical between the two game versions, but we see quite a few changes on the ATI side of things. It's interesting that overall performance appears to improve slightly on ATI hardware with the updated version of the game, outside of anti-aliasing performance.

This is where the waters get a little murky. Why exactly would Ubisoft remove DirectX 10.1 support? There seems to be an implication that it didn't work properly on certain hardware -- presumably lower-end ATI hardware -- but that looks like a pretty weak reason to totally remove the feature. After all, as far as we can tell it only affects anti-aliasing performance, and it's extremely doubtful that anyone with lower-end hardware would be enabling anti-aliasing in the first place. We did notice a few rendering anomalies with version 1.0, but there wasn't anything that should have warranted the complete removal of DirectX 10.1 support. Look at the following image gallery to see a few of the "problems" that cropped up.

In one case, there's an edge that doesn't get anti-aliased on any hardware except ATI HD 3000 with version 1.00 of the game. There may be other edges that also fall into this category, but if so we didn't spot them. The other issue is that periodically ATI hardware experiences a glitch where the "bloom/glare" effect goes crazy. This is clearly a rendering error, but it's not something you encounter regularly in the game. In fact, this error only seems to occur after you first load the game and before you lock onto any targets or use Altaïr's special "eagle vision" -- or one of any number of other graphical effects. In our experience, once any of these other effects have occurred you will no longer see this "glaring" error. I finished playing AC without ever noticing it; I only discovered it during my benchmarking sessions.

So why did Ubisoft remove DirectX 10.1 support? The official statement reads as follows: "The performance gains seen by players who are currently playing AC with a DX10.1 graphics card are in large part due to the fact that our implementation removes a render pass during post-effect which is costly." An additional render pass is certainly costly; what the above statement doesn't clearly state is that DirectX 10.1 allows one fewer rendering pass when running anti-aliasing, and this is a good thing. We contacted AMD/ATI, NVIDIA, and Ubisoft to see if we could get some more clarification on what's going on. Not surprisingly, ATI was the only company willing to talk with us, and even they wouldn't come right out and say exactly what occurred.

Reading between the lines, it seems clear that NVIDIA and Ubisoft reached some sort of agreement where DirectX 10.1 support was pulled with the patch. ATI obviously can't come out and rip on Ubisoft for this decision, because they need to maintain their business relationship. We on the other hand have no such qualms. Money might not have changed hands directly, but as part of NVIDIA's "The Way It's Meant to Be Played" program, it's a safe bet that NVIDIA wasn't happy about seeing DirectX 10.1 support in the game -- particularly when that support caused ATI's hardware to significantly outperform NVIDIA's hardware in certain situations.

Last October at NVIDIA's Editors Day, we had the "opportunity" to hear from several gaming industry professionals about how unimportant DirectX 10.1 was, and how most companies weren't even considering supporting it. Amazingly, even Microsoft was willing to go on stage and state that DirectX 10.1 was only a minor update and not something to worry about. NVIDIA clearly has reasons for supporting that stance, as their current hardware -- and supposedly even their upcoming hardware -- will continue to support only the DirectX 10.0 feature set.

NVIDIA is within their rights to make such a decision, and software developers are likewise entitled to decide whether or not they want to support DirectX 10.1. What we don't like is when other factors stand in the way of using technology, and that seems to be the case here. Ubisoft needs to show that they are not being pressured into removing DX 10.1 support by NVIDIA, and frankly the only way they can do that is to put the support back in a future patch. It was there once, and it worked well as far as we could determine; bring it back (and let us anti-alias higher resolutions).

32 Comments

Griswold - Monday, June 2, 2008

That's no excuse. Halo sucked performance and gameplay wise compared to the PC-first titles of then - and that is what matters. In essence, the game is bad when you're used to playing that genre on the PC. Same is true for Gears of War, but that port is lackluster in many more ways. I fell two times for console to PC ports. Never again.

bill3 - Monday, June 2, 2008

An even worse shooter is Resistance on PS3.