AMD Radeon HD 4670: Ruling from Top to Bottom

by Derek Wilson on September 10, 2008 12:00 AM EST - Posted in GPUs

When AMD released its Radeon HD 4870 and 4850, the price/performance advantage over NVIDIA at the time was so great that we wondered whether it would extend to other GPUs based on the same architecture. Inevitably AMD would offer cost-reduced versions of the 4800 series, and today we're seeing the first example of that. Meet the RV730 XT, otherwise known as the Radeon HD 4670:

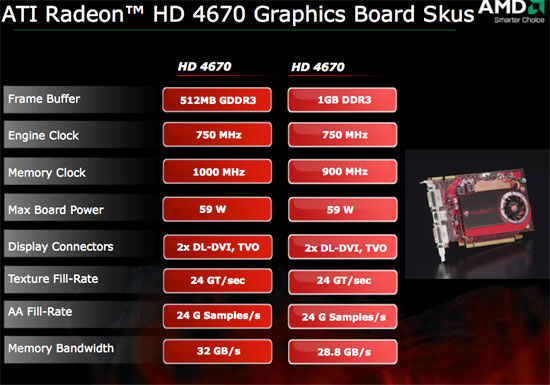

The Radeon HD 4670 is priced at $79, which in the past hasn't really gotten you a very good gaming experience regardless of who made the chip. Today's launch is pretty interesting because the 4670 has the same number of stream processors as the Radeon HD 3870 (320), which at the time of its launch was reasonably competitive in the $180 - $200 range. Let's have a closer look at the 4670's specs:

| | ATI Radeon HD 4870 | ATI Radeon HD 4850 | ATI Radeon HD 4670 | ATI Radeon HD 4650 | ATI Radeon HD 3870 |
|---|---|---|---|---|---|
| Stream Processors | 800 | 800 | 320 | 320 | 320 |
| Texture Units | 40 | 40 | 32 | 32 | 16 |
| ROPs | 16 | 16 | 8 | 8 | 16 |
| Core Clock | 750MHz | 625MHz | 750MHz | 600MHz | 775MHz+ |
| Memory Clock | 900MHz (3600MHz data rate) GDDR5 | 993MHz (1986MHz data rate) GDDR3 | 1000MHz (2000MHz data rate) GDDR3 or 900MHz (1800MHz data rate) DDR3 | 500MHz (1000MHz data rate) DDR2 | 1125MHz (2250MHz data rate) GDDR3 |
| Memory Bus Width | 256-bit | 256-bit | 128-bit | 128-bit | 256-bit |
| Frame Buffer | 512MB | 512MB | 512MB GDDR3 or 1GB DDR3 | 512MB | 512MB |
| Transistor Count | 956M | 956M | 514M | 514M | 666M |
| Die Size | 260 mm² | 260 mm² | 146 mm² | 146 mm² | 190 mm² |
| Manufacturing Process | TSMC 55nm | TSMC 55nm | TSMC 55nm | TSMC 55nm | TSMC 55nm |
| MSRP Price Point | $299 | $199 | $79 | $69 | $199 |
| Current Street Price | $270 | $170 | $80 | N/A | $110 |

Compared to the 3870, clock speeds are a bit lower and we've got much less memory bandwidth, but the hardware has some advantages. The RV730 XT is a derivative of the GPU in the 4800 series cards, and it inherits some of the benefits of that architecture's changes. Antialiasing gained the most, but we also see larger caches, more texturing power (32 texture units versus 16 on the 3870), and improved z/stencil capability. In general we won't see performance on par with the 3870, but the 4670 will do some damage in certain situations, especially when AA comes into play.
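To put the bandwidth gap in numbers, here is a minimal sketch that computes peak theoretical memory bandwidth from the effective data rates and bus widths in the table above. It assumes the usual formula (effective data rate × bus width ÷ 8 bits per byte) and reports decimal GB/s; the `CARDS` dictionary and `peak_bandwidth_gbs` function are our own illustrative names, and real-world throughput will always be somewhat below these peaks.

```python
# Peak theoretical memory bandwidth for the cards in the spec table.
# Assumption: bandwidth = effective data rate * bus width / 8, expressed in
# decimal GB/s (1 GB = 10**9 bytes); real-world efficiency is ignored.

CARDS = {
    # name: (effective data rate in MHz, memory bus width in bits)
    "Radeon HD 4870 (GDDR5)": (3600, 256),
    "Radeon HD 4850 (GDDR3)": (1986, 256),
    "Radeon HD 4670 (GDDR3)": (2000, 128),
    "Radeon HD 4670 (DDR3)": (1800, 128),
    "Radeon HD 4650 (DDR2)": (1000, 128),
    "Radeon HD 3870 (GDDR3)": (2250, 256),
}


def peak_bandwidth_gbs(data_rate_mhz: float, bus_width_bits: int) -> float:
    """Return peak memory bandwidth in GB/s for a given data rate and bus width."""
    transfers_per_second = data_rate_mhz * 1e6
    bytes_per_transfer = bus_width_bits / 8
    return transfers_per_second * bytes_per_transfer / 1e9


if __name__ == "__main__":
    # Print one line per card, rounded to a tenth of a GB/s.
    for name, (rate, width) in CARDS.items():
        print(f"{name}: {peak_bandwidth_gbs(rate, width):.1f} GB/s")
```

By this measure the GDDR3 version of the 4670 has 32 GB/s to work with versus 72 GB/s for the 3870, less than half, and the DDR3 version drops to 28.8 GB/s.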

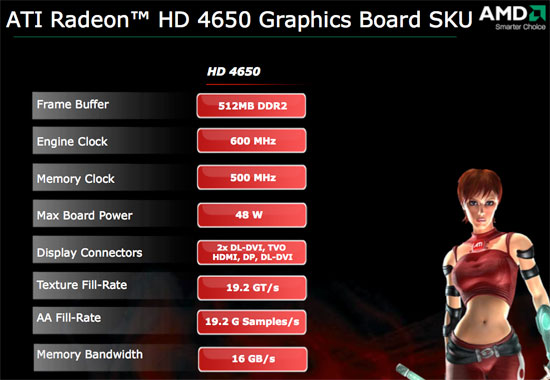

AMD is also announcing (but we're not testing) the Radeon HD 4650, running at a meager 600MHz and using 500MHz DDR2 memory. The 4650 will chop another $10 off the 4670's price tag.

AMD lists board power for the 4670 and 4650 at 59W and 48W, respectively, and both are single-slot cards that need no PCIe power connector. Better still, both include the same 8-channel LPCM audio support over HDMI as the 4800 series. We're waiting to sort out some issues with HDCP and our latest test version of PowerDVD Ultra before confirming the support, but we know first hand that it works on the 4800 series and we see no reason it wouldn't on the 4600 series.

We are quite happy to see AMD pushing its latest-generation technology out across its entire product line. It's great to see new parts making their way into the market rather than a bunch of old cards with slight tweaks and new names. Of course, AMD is fighting back from a disadvantage, so it doesn't have the luxury of letting its previous-generation hardware trickle down the way NVIDIA can. But we certainly hope AMD continues this trend, as the past couple of years have been very hard on the lower end of the spectrum, with a huge lag between the introduction of a new architecture and its availability in the mainstream market.

Also of interest is the fact that AMD has added support in the RV730 for 900MHz DDR3. The move away from GDDR3 toward DDR3, a system memory technology that is currently ramping up and dropping in price, is quite cool. Let's take a look at that in a little more depth.

90 Comments


djc208 - Wednesday, September 10, 2008

"I know the point of the article may be to compare the various GPUs as fairly as possible, but these aren't real world figures because I think you'd be hard pressed to find someone in the real world who will use a budget GPU with an ultra high-end CPU."

The testing is to compare what the card is capable of. If you pair it with lower-end components, then you introduce more variability into the mix: is the game CPU or GPU limited at that resolution? Since no current games really use a quad-core CPU, matching the number of cores is less important than matching the architecture and speed of the test CPU.

Plus if you run similar tests on your system with your CPU you can use this data to figure out where you're best off spending upgrade money in the future. If you can't get the same FPS at the same resolution then a CPU upgrade is going to help more than another GPU upgrade.

Gastrian - Wednesday, September 10, 2008

I should have worded it slightly differently. When we upgrade, we buy a new base unit and pass the older unit on to someone else, so comparing it that way wouldn't work, as it will be a new CPU and GPU.

The entire base units we were pricing up were half the price of the CPU used in this test, and those base units include the AMD 4850 on a DDR2 motherboard.

If you look at some of the Phenom reviews running Crysis (the latest CPU reviews with benchmarks), they don't include the Q9770, which runs at 3.2GHz, but they do have the 3.0GHz Intel E8400 beating a 2.8GHz AMD X2 5600+ by almost 20 FPS at 1024x768 at medium settings, and the Intel chip is £50 more expensive.

The review says this GPU will play Crysis, but if you pair it with a CPU aimed at the same market segment, it won't. Therefore the benchmarks paint completely the wrong picture, as the 4650 may not be suitable for a budget system and you'd be better off paying the extra and getting a 4850.

Spacecomber - Wednesday, September 10, 2008

If a 4650 is significantly throttled by the CPU in a system, it usually won't make a big difference to go to a faster GPU to try to get around this. At the lower resolutions, they'll both be waiting on the CPU. In other words, neither card is in an environment where it can fully utilize its capabilities.

toyota - Wednesday, September 10, 2008

That doesn't even make sense. You don't go with a faster GPU to overcome CPU limitations. If you play Crysis on medium at 1024 with a really slow CPU, adding a fast GPU won't make much difference. It will allow you to bump up settings and res, though.

Gastrian - Wednesday, September 10, 2008

I wouldn't call an AMD X2 5600+ a "really slow CPU", especially for about £55.

It specifically states in the article:

"Additionally, for the 4670, only two of these numbers are less than playable and one is borderline. In general, the 4670 shows that at 1280x1024, it can handle all the current games you might throw at it. In the tests where AA is enabled, the 4670 shows that it has an added advantage, which matters much more at low resolutions than high ones."

The 9600GSO is a rebranded 8800GS which is a slightly underpowered 8800GT.

In the Crysis benchmark with the Q9770, the 9600GSO gets over 60FPS at 1024x768. In the same benchmark for the Phenom X3 review, with a slightly more powerful GPU, the AMD X2 5600+ only gets 45 FPS; that's 75% of the performance. At the 1280x1024 Crysis benchmark with 4xAA, the 4670 is only able to get around 30fps. Now if we apply the 75% performance of the X2 5600+, the AMD 4670 is only getting just over 20fps, which is not exactly playable.

By using the Q9770 in the review, AnandTech have given all these cards a performance increase they wouldn't get in the real world. This is okay in most reviews, where you are just comparing cards, but at the bottom end you are also making sure that they don't go below a certain level of performance. This review shows them being above that line, while actual users may experience something completely different.

derek85 - Saturday, September 13, 2008

The main point of using a fast CPU is to eliminate the possible bottleneck from the CPU, thus comparing purely the performance of the GPU. If you use a CPU that introduces a bottleneck during the benchmark, you would get the same FPS from multiple GPUs without knowing which one is better, and this defeats the whole purpose of a review.

pattycake0147 - Wednesday, September 10, 2008

I'd just like to let you know that I feel the same way you do. IMO there should be a lower-end CPU (X2 5600) to show some numbers closer to what you or I might experience, as well as a higher-end part (Q9770) to show a less CPU-limited environment. Unfortunately AT doesn't cater to those of us who would like some more realistic numbers.

JarredWalton - Thursday, September 11, 2008

It has nothing to do with "realistic"... it's all about time. If it takes late nights just to get one set of numbers finished, doubling the number of tests means you'll miss the launch dates of pretty much every new piece of hardware - or you'll cut tests to make it. There are only so many hours in a week, and that's often what you have to complete testing of a new GPU.

Besides, if a top-end CPU to avoid bottlenecks isn't good enough and you add one other CPU, then someone else wants yet another point of reference, and it just goes from there. We have CPU articles where the GPU is kept constant so you can see CPU scaling, and we have GPU articles where the CPU is constant so you can see GPU scaling. Most games are GPU limited, particularly at high detail settings, but I'd still go with a faster CPU if you can afford it.

Gastrian - Wednesday, September 10, 2008

But that is the environment it's going to be placed in.

All this review shows is that a Q9770 can play Crysis with very little concern for the GPU and that the 4670 is better than a 9600GSO, but that ignores the issue that neither the 4670 nor the 9600GSO may be able to play games.

Building on a budget, I have to ask myself a simple question: is it worth spending xx amount on a GPU that will give me a performance boost but still not enough to adequately play games, or do I spend the same amount on a more powerful CPU and stick with an IGP like the AMD 780G? Neither will play games, BUT the more powerful CPU will be more useful in other areas. This review doesn't answer that question, and I'm still none the wiser as to whether to buy this card or not.

djfourmoney - Wednesday, September 10, 2008

I agree the conclusion is a bit like sour grapes.

I had an HD3870 for $119, which is cheap IMHO, with no rebate needed to get that price. After tax it grew to $129, and for the amount of performance I got for that price, I was impressed:

GRID Demo 1.1 50+fps@1920x1200 Ultra AAx2

However, I had to remove a TV tuner card to use it, so I took it back. I like to watch local HD broadcasts of NCAA college football and NFL football too much to leave that tuner out.

Yes, I could go with a single-slot 9600GT (no GSO here) for $99-150 on Newegg,

or a Sapphire HD3850 for $94.

But the HD4670 is $79 and shouldn't be more than that, even at B&Ms, and it might be slightly less online depending on bulk purchases by companies like TigerDirect and Newegg.

It fits the bill. Yes, I have to take my default resolution in GRID down from 1920x1200 to, say, 1280x768, which is roughly 720p, and at that resolution it should play at 50-60fps on High with AAx2. GRID looks so good even at medium detail that I don't mind turning it down a notch or two just to make it run faster. If I can run it at 60fps at 1920x1200 on Medium, that's plenty for me.

I'm not uninformed or uneducated about PC products, far from it. I know the HD4850 1GB from Sapphire would be ideal in my system, but I don't want to spend $200 on a card, okay? Why should I hold myself to somebody else's standards when I'm not a hardcore gamer/overclocker at heart, just somebody who wants to play a cool game like GRID at a decent frame rate and detail level? While it would be great to get more performance, you would have to go up to an HD3870 to really outdistance the HD4670, and, as I told you, with this mATX board it just won't work and let my two TV tuners live in harmony.

Thus the HD4670 does what I need it to do, and it's not because I'm a cheap SOB or uninformed. I am informed, which makes this an ideal choice for me at my pre-set budget limit.

You should update this with a pair of these in CrossFire; I understand performance is pretty good for a couple of "budget" cards....