AMD Radeon HD 4670: Ruling from Top to Bottom

by Derek Wilson on September 10, 2008 12:00 AM EST - Posted in GPUs

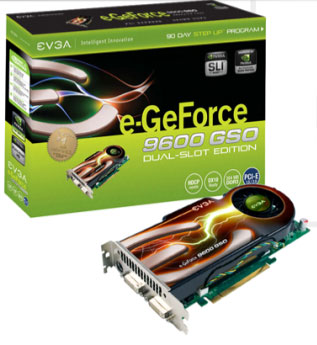

Enter the 8800 GS ... err ... I Mean The 9600 GSO

Recently, we tested the 9500 GT, which is really just a slightly overclocked, die-shrunk version of the 8600 GT. We do see that kind of thing as newer models get pushed out, and it makes economic sense: if you can die-shrink something and sell it at the same price with a little more performance, you'll make more money. There have been times when we've seen the specs of a part change while the name stays the same, which is a little annoying, but we also understand why that happens.

But this is a little extreme. The 9600 GSO is an 8800 GS with a different sticker. Yep, that's it. Same GPU, same board, same everything. The name is the only difference. I don't think I could manage enough sarcasm to even try and make fun of this one properly. Sorry.

Anyway, the 9600 GSO is a $90-$110 part. Sure, you can spend even more if you want an overclocked version, but this is the general range. So why are we looking at it in a review of the $70-$80 price range? Well, it's not really that much more expensive, and that hasn't stopped us from including cards in the past, especially because, at these prices, spending just a little more usually gets you much more for your money. Since we already know the 9500 GT is a little underpowered for its price point, we wanted to see what else NVIDIA had up its sleeve in the same price vicinity.

There is the added complication that a 9600 GT can be had for about $100 as well. There is already a lot of data here, and we don't want to clutter up our charts with cards that aren't really in the same price class (yes, this is ~20% more expensive than the 4670's suggested pricing). The 9600 GT, though, is fairly competitive with the 3870, which we do include as an architectural reference. Based on this, we can talk about the relative value fairly easily.

Prices on sub-$100 hardware are volatile and fairly close together. Honestly, as is generally the case, we'd rather spend just a little more money and get a lot more value. But at some point there needs to be a cutoff, so we'll still look at who comes out on top in the $70-$80 space, and we'll also try to talk about whether that card is good enough to justify saving the extra cash.

Either way it is really important to emphasize that people need to look at current pricing when they are buying hardware. Things fluctuate a lot in the market, and we are going to report as many relevant performance numbers as time allows. Take performance and the best price you can find at the time and factor them both into your decision. While our conclusions on relative value may be most relevant close to the time they are published, there will always be deals to be had that change things up. Currently there are some mail-in rebate offers that make the 9600 GSO more price competitive with the 4670, so don't forget to shop around.

Is Antialiasing the Killer App?

We tend to only touch briefly on antialiasing on the low end, more as a side show than for any serious purpose. Many older games can run on lower end hardware with AA enabled, but most newer games tend to chug to a halt if any decent level of quality has been enabled alongside AA. Will this launch be any different?

Back when we first looked at AMD's new RV7xx architecture, we noted quite a large improvement in antialiasing performance over the previous generation. Part of this, of course, is due to the major issues R6xx and RV6xx hardware had with antialiasing performance. Even so, we felt it was important to dig a little deeper and find out whether the 4670 might be able to run games with 4xAA enabled at resolutions up to 1280x1024.

Why do we care about AA on this hardware? Well, in spite of the fact that antialiasing adds a lot of overhead, its quality benefit is most apparent (and important) at lower resolutions. The larger a pixel is on the screen, the more aliased (jagged) edges look. It's easy to understand if we think about building blocks: if I build the same castle out of huge toddler-sized Duplo blocks and out of standard Lego blocks, one is going to look a lot more natural and smooth than the other. Antialiasing would be a bit like making the corners of some blocks partially transparent. This doesn't really have a real-world analog, but I think it's the best way to get the idea across. The point is that the castle that already looks pretty smooth will look a little smoother, while the really blocky castle will look a lot smoother.
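
The "partially transparent corners" idea can be illustrated with a toy supersampling sketch: each pixel is tested at several sample points against a hard edge, and the fraction of samples that land inside the shape becomes that pixel's coverage. This is a simplification for illustration only; the hypothetical scene (everything below the line y = 0.5x), the ordered 2x2 sample grid, and the function names are assumptions, and it is not a description of how AMD's hardware actually implements AA.

```python
from typing import List


def inside(x: float, y: float) -> bool:
    """Hypothetical scene: everything below the line y = 0.5 * x is 'castle'."""
    return y < 0.5 * x


def pixel_coverage(px: int, py: int, samples_per_axis: int) -> float:
    """Fraction of sample points in pixel (px, py) that fall inside the shape."""
    n = samples_per_axis
    hits = 0
    for i in range(n):
        for j in range(n):
            # Evenly spaced sub-pixel sample positions (an ordered grid).
            sx = px + (i + 0.5) / n
            sy = py + (j + 0.5) / n
            if inside(sx, sy):
                hits += 1
    return hits / (n * n)


def render_row(py: int, width: int, samples_per_axis: int) -> List[float]:
    """Coverage values for one row of pixels."""
    return [pixel_coverage(px, py, samples_per_axis) for px in range(width)]


if __name__ == "__main__":
    # One sample per pixel: every pixel is fully on or fully off (jagged edge).
    print(render_row(1, 6, 1))
    # 2x2 = 4 samples per pixel: pixels along the edge get in-between values.
    print(render_row(1, 6, 2))
```

With one sample per pixel the row comes out as all-or-nothing values, which is the stair-step edge; with four samples the pixels straddling the edge take intermediate values (0.25 and 0.75 here), and those in-between shades are what make the edge look smoother on screen.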

Small rabbit hole here: the real long-term solution to image quality is not AA, it is increasing DPI (dots per inch). Decreasing the size of a pixel will do a lot more to make an image look smooth than any amount of antialiasing could. What's the analog in the real world? Compare those Duplo and Lego castles to a sand castle. Many more, much smaller grains of sand mean a very, very smooth appearance with no AA needed. Display technology has fallen severely short over the past few years, and we still don't have desktop LCD panels that really compete with top-of-the-line CRTs from 7 or 8 years ago.
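
To put numbers on pixel size, pixel density can be computed from a display's resolution and diagonal: the diagonal length in pixels divided by the diagonal in inches. The sketch below assumes square pixels, and the panel sizes it uses (17-inch and 19-inch 1280x1024 LCDs, a 24-inch 1920x1200 LCD) are hypothetical examples rather than monitors from this review.

```python
import math


def pixels_per_inch(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Pixel density, assuming square pixels: diagonal in pixels / diagonal in inches."""
    return math.hypot(width_px, height_px) / diagonal_in


def pixel_pitch_mm(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Approximate width of one pixel in millimetres (25.4 mm per inch)."""
    return 25.4 / pixels_per_inch(width_px, height_px, diagonal_in)


if __name__ == "__main__":
    # Hypothetical example panels; sizes are assumptions for illustration only.
    panels = [
        ("17-inch 1280x1024", 1280, 1024, 17.0),
        ("19-inch 1280x1024", 1280, 1024, 19.0),
        ("24-inch 1920x1200", 1920, 1200, 24.0),
    ]
    for name, w, h, diag in panels:
        print(f"{name}: {pixels_per_inch(w, h, diag):.1f} PPI, "
              f"{pixel_pitch_mm(w, h, diag):.3f} mm pixel pitch")
```

The same 1280x1024 image stretched over a 19-inch panel has fewer pixels per inch, and therefore larger pixels, than on a 17-inch panel, so its edges look more jagged. Raising PPI shrinks every pixel at once, which is the "sand castle" effect described above.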

Anyway, the point is that if these cards that can't run at very high resolutions are paired with a low resolution monitor (say 1024x768 or 1280x1024), we would really see some benefit from enabling AA due to the large pixel sizes. The feature is more important here than at the high end, and we could get a significantly better experience on this hardware if we had the benefit of AA. The question is: can the improvements that AMD made to their AA hardware translate into large enough performance gains in the 4670 over competing hardware to justify the use of antialiasing in games?

Let's keep an eye out for answers as we look at our test results.

90 Comments

djc208 - Wednesday, September 10, 2008

"I know the point of the article may be to compare the various GPUs as fairly as possible, but these aren't real-world figures, because I think you'd be hard pressed to find someone in the real world who will use a budget GPU with an ultra high-end CPU."

The testing is to compare what the card is capable of. If you pair it with lower-end components, you introduce more variability into the mix: is the game CPU- or GPU-limited at that resolution? Since no current games really use a quad-core CPU, matching the number of cores is less important than matching the architecture and speed of the test CPU.

Plus, if you run similar tests on your system with your CPU, you can use this data to figure out where you're best off spending upgrade money in the future. If you can't get the same FPS at the same resolution, then a CPU upgrade is going to help more than another GPU upgrade.
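
The CPU-limited versus GPU-limited question can be sketched with a deliberately simplified model, assuming that each frame needs both CPU and GPU work and that the slower of the two sets the frame rate. The function name and every fps figure below are hypothetical, chosen only for illustration; real games overlap CPU and GPU work and will not follow this model exactly.

```python
def estimated_fps(cpu_limit_fps: float, gpu_limit_fps: float) -> float:
    """Simplified bottleneck model: the slower stage caps the frame rate.

    cpu_limit_fps: frames per second the CPU could prepare with an infinitely fast GPU.
    gpu_limit_fps: frames per second the GPU could render with an infinitely fast CPU.
    """
    return min(cpu_limit_fps, gpu_limit_fps)


if __name__ == "__main__":
    # Hypothetical GPU-limited rates for two cards at the same settings.
    gpus = {"card A": 60.0, "card B": 50.0}
    # Hypothetical CPU-limited rates for a fast and a slow CPU.
    cpus = {"fast CPU": 120.0, "slow CPU": 45.0}

    for cpu_name, cpu_fps in cpus.items():
        for gpu_name, gpu_fps in gpus.items():
            fps = estimated_fps(cpu_fps, gpu_fps)
            print(f"{cpu_name} + {gpu_name}: {fps:.0f} fps")
```

Under this model the fast CPU lets the two cards separate (60 versus 50 fps), while the slow CPU caps both at 45 fps and hides the difference, which is why GPU reviews use a fast CPU. It also matches the point above: if your own system scores below the review's numbers at the same settings, the CPU is the more likely limit.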

Gastrian - Wednesday, September 10, 2008

I should have worded it slightly differently. When we upgrade, we buy a new base unit and pass the older unit on to someone else, so comparing it that way wouldn't work, as it will be a new CPU and GPU.

The entire base units we were pricing up were half the price of the CPU used in this test, and those base units include the AMD 4850 on a DDR2 motherboard.

If you look at some of the Phenom reviews running Crysis (the latest CPU reviews with benchmarks), they don't include the Q9770, which runs at 3.2GHz, but they do have the 3.0GHz Intel E8400 beating a 2.8GHz AMD X2 5600+ by almost 20FPS at 1024x768 at medium settings, and the Intel chip is £50 more expensive.

The review says this GPU will play Crysis, but if you pair it with a CPU aimed at the same market segment, it won't. The benchmarks therefore paint completely the wrong picture, as the 4650 may not be suitable for a budget system and you'd be better off paying the extra and getting a 4850.

Spacecomber - Wednesday, September 10, 2008

If a 4650 is significantly throttled by the CPU in a system, it usually won't make a big difference to go to a faster GPU to try to get around this. At the lower resolutions, they'll both be waiting on the CPU. In other words, neither card is in an environment where it can fully utilize its capabilities.

toyota - Wednesday, September 10, 2008

That doesn't even make sense. You don't go with a faster GPU to overcome CPU limitations. If you play Crysis on medium at 1024 with a really slow CPU, adding a fast GPU won't make much difference. It will allow you to bump up settings and resolution, though.

Gastrian - Wednesday, September 10, 2008

I wouldn't call an AMD X2 5600+ a "really slow CPU", especially for about £55. It specifically states in the article:

"Additionally, for the 4670, only two of these numbers are less than playable and one is borderline. In general, the 4670 shows that at 1280x1024, it can handle all the current games you might throw at it. In the tests where AA is enabled, the 4670 shows that it has an added advantage, which matters much more at low resolutions than high ones."

The 9600GSO is a rebranded 8800GS which is a slightly underpowered 8800GT.

In the Crysis benchmark with the Q9770, the 9600GSO gets over 60FPS at 1024x768. In the same benchmark for the Phenom X3 review, with a slightly more powerful GPU, the AMD X2 5600+ only gets 45FPS; that's 75% of the performance. In the 1280x1024 Crysis benchmark with 4xAA, the 4670 only manages around 30fps. If we apply the 75% performance of the X2 5600+, the AMD 4670 only gets just over 20fps, which is not exactly playable.

By using the Q9770 in the review, AnandTech has given all these cards a performance increase they wouldn't get in the real world. This is okay in most reviews, as you are just comparing cards, but at the bottom end you are also checking that they don't fall below a certain level of performance. This review shows them above that line, while actual users may experience something completely different.
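
The estimate in the comment above is a proportional scaling: take the ratio of the two Crysis results (45 fps with the X2 5600+ versus just over 60 fps with the Q9770) and apply it to the 4670's roughly 30 fps at 1280x1024 with 4xAA. The minimal sketch below reproduces that arithmetic. It treats "over 60FPS" as exactly 60, the function name is hypothetical, and it assumes, as the comment does, that the slower CPU costs the same fraction of performance in a GPU-heavy 4xAA test as in a 1024x768 medium-detail test run with a different GPU; that assumption is what the other commenters dispute.

```python
def scale_by_cpu_ratio(observed_fps: float, fast_cpu_fps: float, slow_cpu_fps: float) -> float:
    """Scale a result measured on a fast CPU by the slow/fast CPU result ratio.

    Assumes frame rate is proportional to CPU performance, which only holds
    when the test is fully CPU-limited.
    """
    ratio = slow_cpu_fps / fast_cpu_fps
    return observed_fps * ratio


if __name__ == "__main__":
    ratio = 45 / 60  # X2 5600+ result relative to the Q9770 result
    print(f"CPU ratio: {ratio:.0%}")
    print(f"Projected 4670 fps: {scale_by_cpu_ratio(30, 60, 45):.1f}")
```

This yields the 75% ratio and a projected 22.5 fps, the "just over 20fps" in the comment. If the 4xAA test is GPU-limited rather than CPU-limited, the proportional assumption overstates the penalty.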

derek85 - Saturday, September 13, 2008

The main point of using a fast CPU is to eliminate a possible CPU bottleneck, so that you are comparing purely the performance of the GPU. If you use a CPU that introduces a bottleneck during the benchmark, you would get the same FPS from multiple GPUs without knowing which one is better, and this defeats the whole purpose of a review.

pattycake0147 - Wednesday, September 10, 2008

I'd just like to let you know that I feel the same way you do. IMO there should be a lower-end CPU (X2 5600) to show some numbers closer to what you or I might experience, as well as a higher-end part (Q9770) to show a less CPU-limited environment. Unfortunately, AT doesn't cater to those of us who would like some more realistic numbers.

JarredWalton - Thursday, September 11, 2008

It has nothing to do with "realistic"... it's all about time. If it takes late nights just to get one set of numbers finished, doubling the number of tests means you'll miss the launch dates of pretty much every new piece of hardware - or you'll cut tests to make it. There are only so many hours in a week, and that's often what you have to complete testing of a new GPU.

Besides, if a top-end CPU to avoid bottlenecks isn't good enough and you add one other CPU, then someone else wants yet another point of reference, and it just goes from there. We have CPU articles where the GPU is kept constant so you can see CPU scaling, and we have GPU articles where the CPU is constant so you can see GPU scaling. Most games are GPU limited, particularly at high detail settings, but I'd still go with a faster CPU if you can afford it.

Gastrian - Wednesday, September 10, 2008

But that is the environment it's going to be placed in.

All this review shows is that a Q9770 can play Crysis with very little concern for the GPU, and that the 4670 is better than a 9600GSO, but that ignores the issue that neither the 4670 nor the 9600GSO may be able to play games.

Building on a budget, I have to ask myself a simple question: is it worth spending xx on a GPU that will give me a performance boost but still not enough to play games adequately, or do I spend the same amount on a more powerful CPU and stick with an IGP like the AMD 780G? Neither will play games, BUT the more powerful CPU will be more useful in other areas. This review doesn't answer that question, and I'm still none the wiser as to whether to buy this card or not.

djfourmoney - Wednesday, September 10, 2008

I agree the conclusion is a bit like sour grapes.

I had an HD3870 for $119, which is cheap IMHO with no rebate needed to get that price, but after tax it grew to $129, and for the amount of performance I got for that price, I was impressed:

GRID Demo 1.1: 50+ fps at 1920x1200, Ultra, 2xAA

However, I had to remove a TV tuner card to use it, so I took it back. I like to watch local HD broadcasts of NCAA college football and NFL football too much to leave that tuner out.

Yes, I could go with a single-slot 9600GT (no GSO here) for $99-150 on Newegg.

Or a Sapphire HD3850 for $94

But the HD4670 is $79 and shouldn't be more than that, even at B&Ms, and might be slightly less online depending on bulk purchases by companies like TigerDirect and Newegg.

It fits the bill. Yes, I have to drop my default resolution in GRID from 1920x1200 to, say, 1280x768, which is close to 720p, and at that resolution it should play at 50-60fps on High with 2xAA. GRID looks so good even at medium detail that I don't mind turning it down a notch or two just to make it run faster. If I can run it at 60fps at 1920x1200 on Medium, that's plenty for me.

I'm not uninformed or uneducated about PC products, far from it. I know the HD4850 1GB from Sapphire would be ideal in my system, but I don't want to spend $200 on a card, okay? Why should I hold myself to somebody else's standards when I'm not a hardcore gamer/overclocker at heart, just somebody who wants to play a cool game like GRID at a decent frame rate and detail level? While it would be great to get more performance, you would have to go up to an HD3870 to really outdistance the HD4670, and as I told you, with this mATX board it just won't fit and still let my two TV tuners live in harmony.

Thus the HD4670 does what I need it to do, and it's not because I'm a cheap SOB or uninformed. I am informed, which makes this an ideal choice for me at my preset budget limit.

You should update this with a pair of these in CrossFire; I understand performance is pretty good for a couple of "budget" cards....