NVIDIA's Bumpy Ride: A Q4 2009 Update

by Anand Lal Shimpi on October 14, 2009 12:00 AM EST - Posted in GPUs


There’s a lot to talk about with regards to NVIDIA and no time for a long intro, so let’s get right to it.

At the end of our Radeon HD 5850 Review we included this update:

“Update: We went window shopping again this afternoon to see if there were any GTX 285 price changes. There weren't. In fact GTX 285 supply seems pretty low; MWave, ZipZoomFly, and Newegg only have a few models in stock. We asked NVIDIA about this, but all they had to say was "demand remains strong". Given the timing, we're still suspicious that something may be afoot.”

Less than a week later, there were stories everywhere about NVIDIA’s GT200b shortages. Fudo said that NVIDIA was unwilling to drop prices low enough to make the cards competitive. Charlie said that NVIDIA was going to abandon the high-end and upper-midrange graphics card markets completely.

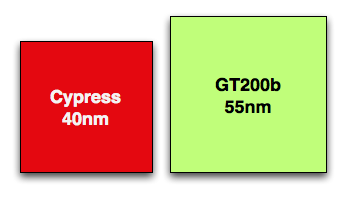

Let’s look at what we do know. GT200b has around 1.4 billion transistors and is made at TSMC on a 55nm process. Wikipedia lists the die at 470mm², roughly 80% the size of the original 65nm GT200 die. Either way, it’s a lot bigger and still more expensive than Cypress’ 334mm² 40nm die.

Cypress vs. GT200b die sizes to scale

NVIDIA could get into a price war with AMD, but given that both companies make their chips at the same foundry (TSMC) and NVIDIA’s costs are higher, it’s not a war that makes sense to fight.
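To put the die sizes above in rough perspective, here is a back-of-the-envelope sketch using a common first-order approximation for gross dies per wafer. It assumes 300mm wafers and ignores defect yield, scribe lines and edge exclusion. It also says nothing about wafer pricing, which differs between 55nm and 40nm, so treat it as an illustration of the area gap rather than a cost model. The function name is hypothetical.

```python
import math

# Die areas in mm^2, as quoted in the article.
GT200B_MM2 = 470.0
CYPRESS_MM2 = 334.0
WAFER_DIAMETER_MM = 300.0  # assumption: standard 300mm wafers


def gross_dies_per_wafer(die_area_mm2: float,
                         wafer_diameter_mm: float = WAFER_DIAMETER_MM) -> int:
    """First-order estimate: wafer area / die area, minus an edge-loss term.

    Ignores defects (yield), scribe lines and edge exclusion.
    """
    wafer_area = math.pi * (wafer_diameter_mm / 2) ** 2
    edge_loss = math.pi * wafer_diameter_mm / math.sqrt(2 * die_area_mm2)
    return int(wafer_area / die_area_mm2 - edge_loss)


print(f"GT200b is {GT200B_MM2 / CYPRESS_MM2:.2f}x the area of Cypress")  # 1.41x
print("GT200b gross dies per wafer:", gross_dies_per_wafer(GT200B_MM2))  # 119
print("Cypress gross dies per wafer:", gross_dies_per_wafer(CYPRESS_MM2))  # 175
```

Larger dies also tend to yield worse, which would widen the gap further; this formula does not model that.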

NVIDIA told me two things. First, they have told some OEMs that they will no longer be making GT200b-based products, which covers everything from the GTX 260 up to the GTX 285. The EOL (end of life) notices went out recently, and NVIDIA is asking OEMs to submit their allocation requests as soon as possible or risk not getting any cards.

Second, despite the EOL notices, end users should be able to purchase GeForce GTX 260, 275 and 285 cards all the way through February of next year.

If you look carefully, neither of these statements directly supports or refutes the two articles above. NVIDIA is very clever.

NVIDIA’s explanation to me was that current GPU supplies were decided months ago and, in light of the economy, NVIDIA ordered a low number of chips from TSMC. Demand ended up being stronger than expected, so you can expect supplies to be tight for the remaining months of the year and into 2010.

Board vendors have been telling us that they can’t get allocations from NVIDIA. Some are even wondering whether it makes sense to build more GTX cards for the end of this year.

If you want my opinion, it goes something like this. While RV770 caught NVIDIA off guard, Cypress did not. AMD used the extra area allowed by the move to 40nm (and then some) to double RV770, which was hardly an unpredictable move. NVIDIA knew they were going to be late with Fermi, knew how competitive Cypress would be, and made a conscious decision to cut back supply months ago rather than enter a price war with AMD.

While NVIDIA won’t publicly admit defeat, AMD clearly won this round. Obviously it makes sense to ramp down the old product in expectation of Fermi, but I don’t see Fermi with any real availability this year. We may see a launch with performance data in 2009, but I’d expect availability in 2010.

While NVIDIA just launched its first 40nm DX10.1 parts, AMD just launched $120 DX11 cards

Regardless of how you want to phrase it, there will be lower than normal supplies of GT200 cards in the market this quarter. With higher costs than AMD per card and better performance from AMD’s DX11 parts, would you expect things to be any different?

Things Get Better Next Year

NVIDIA launched GT200 on too old a process (65nm) and was then too late moving to 55nm. Bumpgate happened. Then we had the issues with 40nm at TSMC and Fermi’s delays. In short, it hasn’t been the best 12 months for NVIDIA. Next year, though, there’s reason to be optimistic.

When Fermi does launch, everything from that point should theoretically be smooth sailing. There aren’t any process transitions in 2010; it’s all about execution at that point and how quickly NVIDIA can get Fermi derivatives out the door. AMD will have virtually its entire product stack out by the time NVIDIA ships Fermi in quantity, but NVIDIA should have competitive products out in 2010. AMD wins the first half of the DX11 race; the second half will be a bit more challenging.

If anything, NVIDIA has proved to be a resilient company. Other than Intel, I don’t know of any company that could’ve recovered from NV30. The real question is how strong Fermi 2 will be. Stumble twice and you’re shaken; do it a third time and you’re likely to fall.

106 Comments


Scali - Sunday, October 18, 2009

If you sort by percentage change: http://store.steampowered.com/hwsurvey/videocard/?...

Then add up all the increases for nVidia parts and you get +1.42%.

Do the same for all ATi parts, and you get +1.06%.

(Obviously you can't do a direct comparison of the 260 to the entire 4800-series, as the 260 is just a single part. You would then ignore various other parts that also compete with the 4800 series, such as the 9800GTX).

shin0bi272 - Thursday, October 15, 2009

It does MIMD processing and parallel kernel processing. It also does true 32-bit integer multiplies in one clock (the previous generation only did 24-bit natively and emulated 32-bit), and it now follows the IEEE 754-2008 standard instead of the 1985 standard. Its 64-bit (double precision) performance is now 1/2 that of single precision, while the previous generation's was 1/8 and AMD's is 1/5.

With the addition of a second dispatch unit, they can send special functions to the SFU at the same time as general-purpose shader instructions, so they no longer have to tie up the entire SM (a group of shader cores acting as a unit, from what I can gather) to do an interpolation calculation.

They added an actual cache hierarchy instead of relying only on the software-managed shared memory of the GT200 series. That eliminates a big problem they were having, the limited shared memory size, and should mean increased performance.

Nvidia says that switching between CUDA and graphics is now 10x faster, which should mean a dramatic increase in PhysX performance. It also supports parallel transfers to and from the CPU instead of the serial transfers of previous cards, so multiple CPU cores and multiple connections to the GPU should mean much better performance.

Nvidia claims they learned their lesson about moving to different die sizes and guessing at their pricing. They were really pushing the new die with the old FX 5800 chip and got beaten badly by ATI. Conversely, they took their time with the GT200s, priced them according to what they thought the ATI 48xx would be, and ended up overshooting by a LOT. Whether or not they are full of it remains to be seen, but hopefully we can expect a $450-500 GT300 flagship card early in 2010. The biggest issue is that ATI already has boards in production, with 1600 SIMD (single instruction, multiple data) cores vs. 512 MIMD cores in Fermi. The ATI card also runs at 850MHz vs. probably 650MHz for Nvidia's. We will just have to wait and see the benchmarks, I guess.

Plus, add in the horrible state of the economy (which is only going to get worse when Congress does the budget for 2011 in March), the falling value of the dollar, the continuing increase in the unemployment rate, the impending deficit/debt issues, the oil-producing countries talking of dumping the dollar, the UN wanting to dump the dollar as the international reserve currency, etc., and Nvidia cutting back on production might not be such a bad idea. If the economy collapses again once the government has to raise interest rates and taxes to pay for its spending, then AMD might be hurting with so much product sitting on the shelves collecting dust.

(Fermi data from http://anandtech.com/video/showdoc.aspx?i=3651&...)
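One concrete change in the 2008 revision of IEEE 754 is a standardized fused multiply-add (FMA), which computes a*b + c with a single rounding instead of rounding the product and then the sum. The comment above doesn't say which parts of the standard matter for Fermi, so this is only an illustration of that one difference. The sketch emulates FMA in Python using exact `fractions.Fraction` arithmetic followed by one conversion back to a double; both helper names are hypothetical.

```python
from fractions import Fraction


def fused_multiply_add(a: float, b: float, c: float) -> float:
    """Emulated FMA: compute a*b + c exactly, then round once to a double."""
    # Fraction arithmetic is exact; float() does a single correctly rounded conversion.
    return float(Fraction(a) * Fraction(b) + Fraction(c))


def separate_multiply_add(a: float, b: float, c: float) -> float:
    """Plain multiply then add: the product is rounded, then the sum is rounded."""
    return a * b + c


a = 1.0 + 2.0**-30
b = 1.0 - 2.0**-30
c = -1.0

# The exact product is 1 - 2**-60, which rounds to 1.0 before the add.
print(separate_multiply_add(a, b, c))  # 0.0
# With a single rounding, the tiny residue survives.
print(fused_multiply_add(a, b, c))  # -2**-60, about -8.67e-19
```

The difference only shows up when the intermediate product carries bits that a double can't hold, which is exactly the case FMA was standardized to handle.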

Scali - Thursday, October 15, 2009

You should put the 1600 SIMD vs. 512 SIMD in the proper perspective, though. Last generation it was 800 SIMD (HD4870/HD4890) vs. 240 SIMD (GTX280/GTX285).

With 1600 vs. 512, the ratio actually improves in nVidia's favour, going from 3.33:1 to about 3.1:1.
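The ratio arithmetic in the comment above, using only the unit counts it quotes (clocks, issue width and efficiency are deliberately ignored; the helper name is hypothetical):

```python
def units_ratio(amd_units: int, nvidia_units: int) -> float:
    """Raw AMD:NVIDIA shader-unit ratio; ignores clocks, issue width and efficiency."""
    return amd_units / nvidia_units


last_gen = units_ratio(800, 240)   # HD 4870/4890 vs. GTX 280/285
this_gen = units_ratio(1600, 512)  # Cypress vs. Fermi

print(f"last gen {last_gen:.3f}:1, this gen {this_gen:.3f}:1")
# last gen 3.333:1, this gen 3.125:1
```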

shin0bi272 - Friday, October 16, 2009

Ahh, that's true, I didn't think about that. With all the other improvements and the move to GDDR5, it should be a great card.

Zool - Thursday, October 15, 2009

In the worst-case scenario ATI has 320 ALUs, and in the best case 1600. Nvidia has the same 512 ALUs in both the best and worst case, and Nvidia's shaders run at a much higher frequency. Let's say double, although 1700MHz shaders won't be too realistic for GT300.

So in the worst-case scenario, GT300 actually has more than 3 times as many effective ALUs as the Radeon. In the best-case scenario, the Radeon has around a 60% advantage over Nvidia (with the unrealistic shader clocks).

And in the end, some instructions may take more clock cycles on GT300 and some on the Radeon 5800.

So your perspective is way off.
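Zool's worst-case and best-case figures can be reproduced with a few lines of arithmetic. Two assumptions carry the whole result: Cypress's 1600 ALUs are grouped five-wide, so the worst case leaves 320 lanes busy, and Fermi's shaders run at twice a common reference clock, which is Zool's hypothetical and not a published Fermi spec.

```python
AMD_ALUS = 1600
AMD_VLIW_WIDTH = 5  # five-wide groups; worst case keeps one lane per group busy
NV_ALUS = 512
NV_SHADER_CLOCK_FACTOR = 2.0  # hypothetical: Fermi shaders at 2x the reference clock

amd_worst = AMD_ALUS / AMD_VLIW_WIDTH            # 320 lanes busy
amd_best = AMD_ALUS                              # all 1600 lanes busy
nv_effective = NV_ALUS * NV_SHADER_CLOCK_FACTOR  # 1024 reference-clock ALUs

worst_case_nv_lead = nv_effective / amd_worst  # NVIDIA's edge when AMD packs poorly
best_case_amd_lead = amd_best / nv_effective   # AMD's edge when AMD packs perfectly

print(f"worst case: NVIDIA ahead {worst_case_nv_lead:.2f}x")  # 3.20x
print(f"best case: AMD ahead {best_case_amd_lead - 1:.0%}")   # 56%
```

That is where the "more than 3 times" and "around 60%" figures come from; as both commenters note, raw ALU counts like these say little about real-world performance.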

Scali - Thursday, October 15, 2009

My perspective was purely that the gap in number of SIMDs between AMD and nVidia becomes smaller with this generation, not larger. It's spot on, as there simply ARE 800 and 240 units in the respective parts I mentioned. Now you can go and argue about clock speeds and best case/worst case, but that won't do much good since we don't know any of that information on Fermi. We'll just have to wait for some actual benchmarks.

Zool - Friday, October 16, 2009

The point of my reply was that plain shader-vs-shader conclusions are very inaccurate and usually mean nothing.

Scali - Friday, October 16, 2009

I never claimed otherwise. I just pointed out that this particular ratio would move more towards nVidia, as the post I was replying to appeared to assume the opposite and voiced concern about the large difference in raw numbers.

Zool - Thursday, October 15, 2009

The GT240 doesn't seem to be much better if they follow the GT220 pricing scenario. Link: http://translate.google.com/translate?prev=hp&...

The core/shader clocks are pretty low for 40nm. Let's hope GT300 will run higher than that.

shotage - Thursday, October 15, 2009

I just read this article and found it pretty insightful. It focuses on the shift in GPU microarchitecture, primarily Nvidia and their upcoming Fermi architecture. A very good technical read for those who are interested: http://www.realworldtech.com/page.cfm?ArticleID=RW...